Music to My Eyes

See It. Feel It. Live It. Because everyone deserves music.

- TL;DR - This was my final project for User Interface Design (CS 160, UC Berkeley). The theme was accessibility; my group chose to make an application that provides visual and haptic representations of music for people who are hard of hearing. We decided to use colorful patterns and vibrations to represent songs. We also included the ability to import music from the user's music library, create playlists, and visualize live sounds from the user's environment using microphone input. Below, I outline our design process, user testing, competitive analysis, and final product.

Motivation

People who are hard of hearing, especially those with complete deafness, face limitations in their ability to access and experience music. This is where our application comes in. Music to My Eyes aims to increase accessibility to music by generating audio visualizations of waveforms from the user’s music library, or the sounds around them through microphone input. With Music to My Eyes, users can also easily access lyrics and vibrations so they can experience their music through multiple senses.

Brainstorming

Process

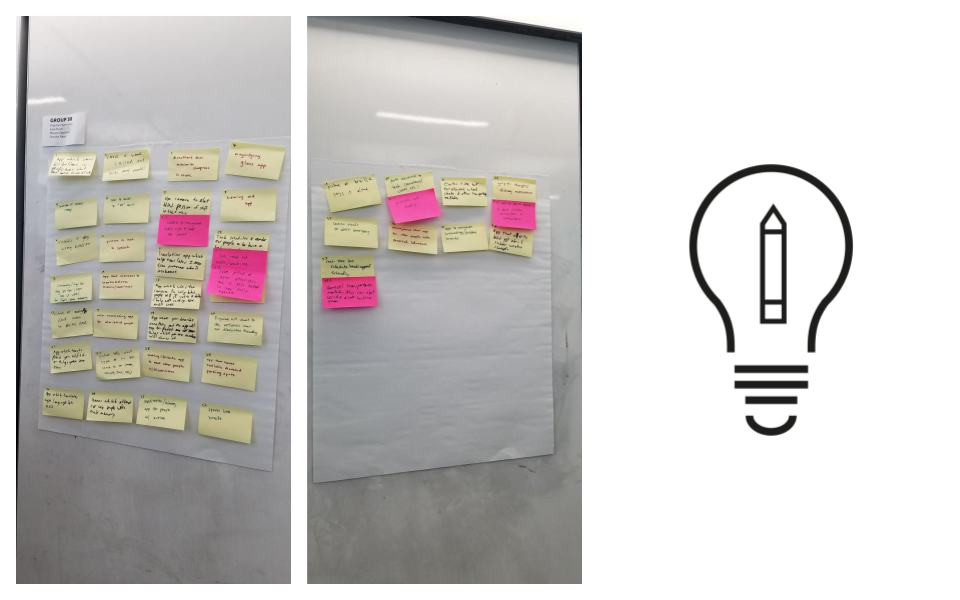

We began this project with a plethora of ideas centered around the theme of accessibility. As we narrowed down from our list of 50 ideas, we found that Music to My Eyes was an idea that our group had the time, resources, and interest to realize. Not only did we have an idea of how to bring this project into reality, but we also had a clear vision for how this idea would benefit the community. Therefore, we chose to pursue Music to My Eyes as our final project for the semester.

Top Three Ideas

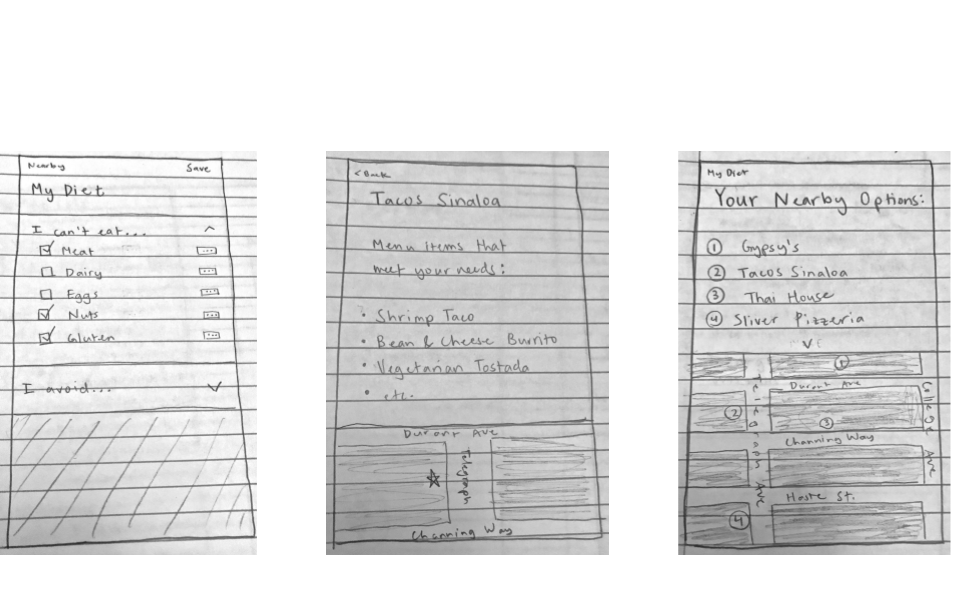

EdAble: A Yelp-like application that would cater to individuals with dietary restrictions and allergies. Individuals could filter restaurants and menus for their own needs, and restaurants could access these restrictions to get their orders right.

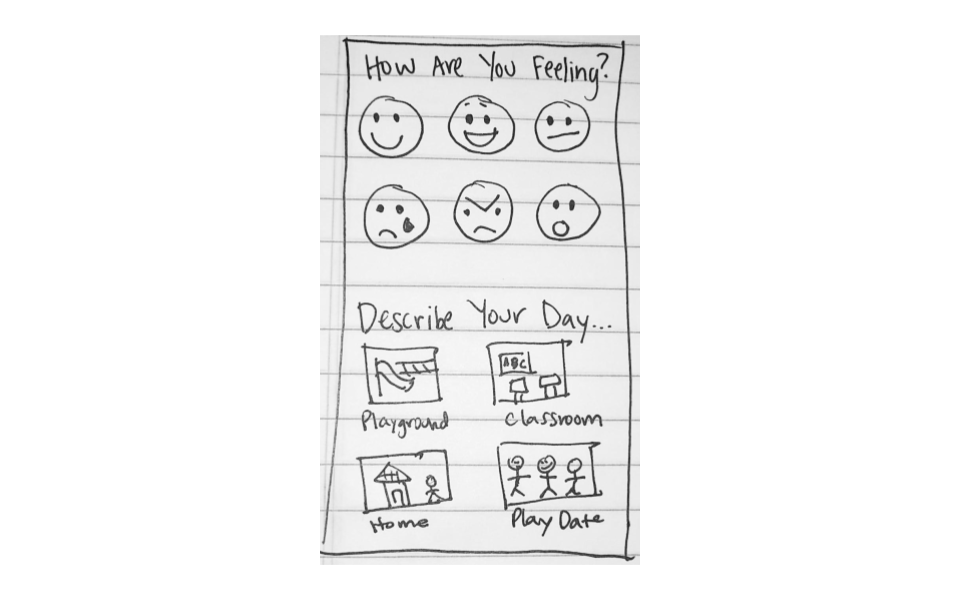

Emotions App: An app to help children on the autism spectrum communicate by displaying everyday emotions, moods, and common events for them to choose from and show their teachers. This would be useful for classroom conflict resolution.

Music to My Eyes: Our original idea was to map sound frequencies and pitch to certain colors, and display songs through color. With further brainstorming, we iterated towards our final idea of a single color visualization that uses a waveform design to convey volume and frequency.

Scenarios

Early in our design process we came up with many scenarios in which users could utilize our app. Here are a few that justify some of the features we have included:

- While walking to class, waiting for the bus, or in their free time, the user can pull out their phone to watch the visualizations and follow along with lyrics to their favorite songs.

- If the user is studying and doesn’t want to actively look at their screen, they can turn on vibrations, put their phone in their lap or pocket, and experience music through haptic feedback.

- If the user is at a party, concert, or music event with friends, they can use our Live Microphone feature to generate real-time visualizations and vibrations of the sounds around them, and take part in the fun.

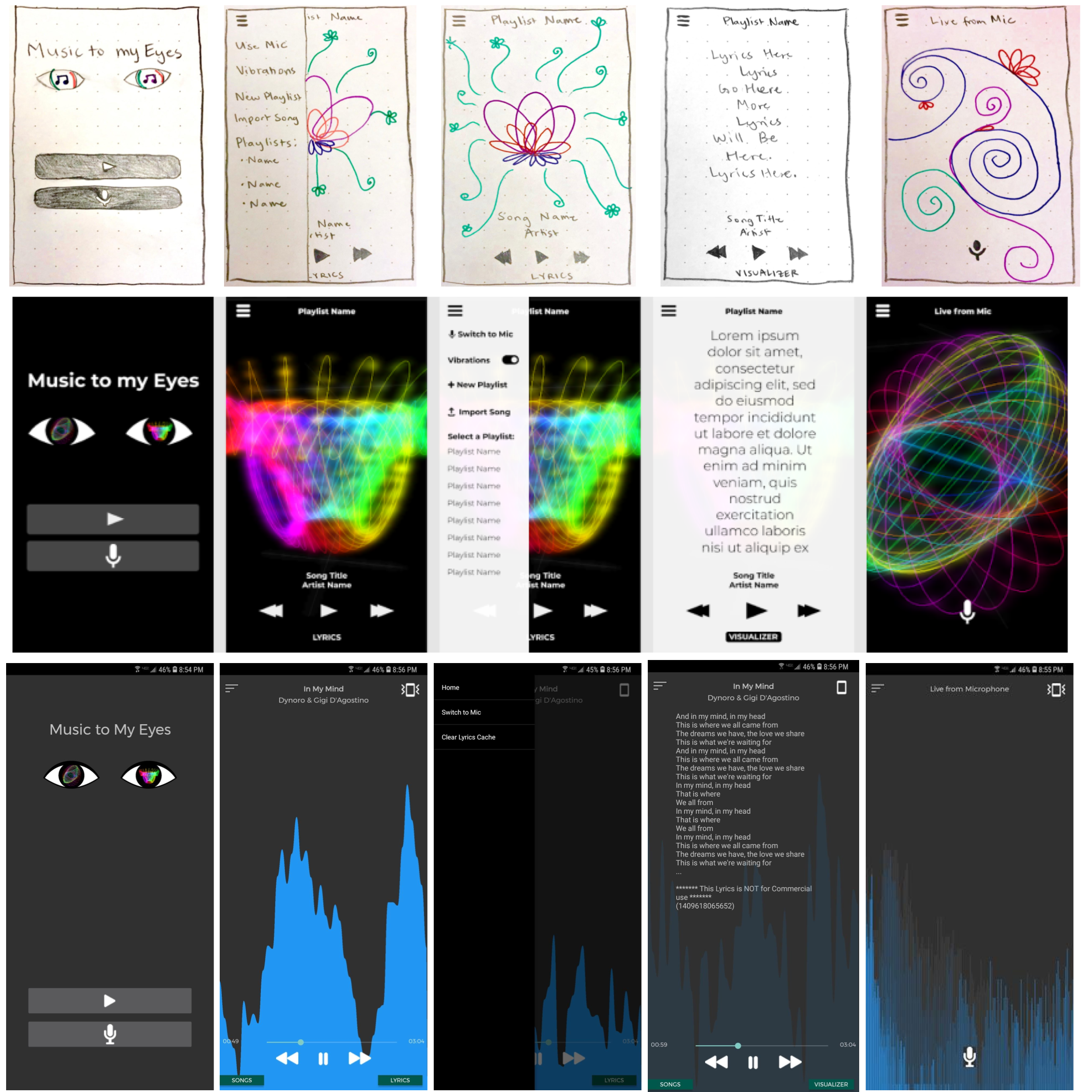

Low-Fidelity Prototypes: Paper Sketches

First Iteration

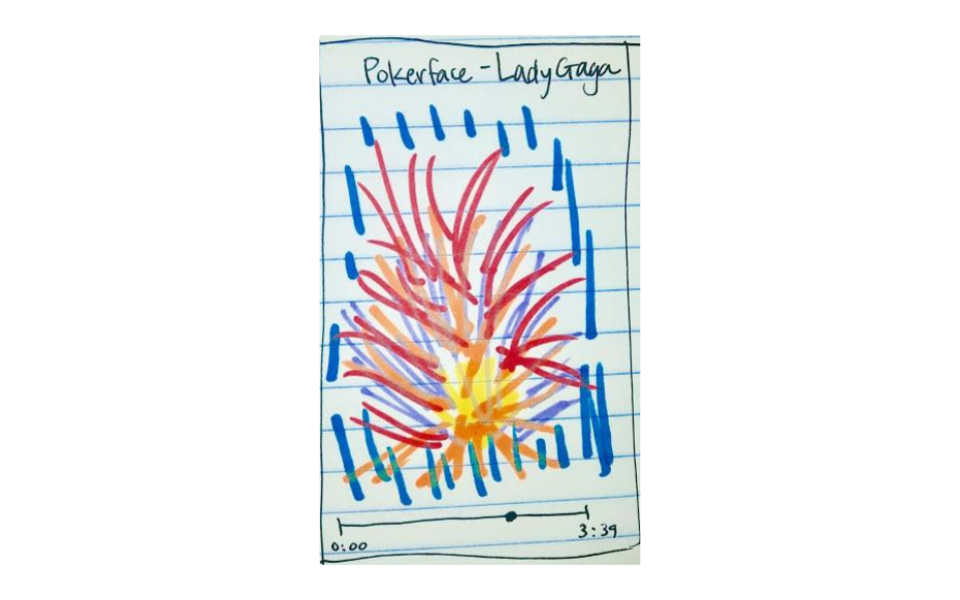

Our very first sketch was a simple one-view application with just the visualization, song title and artist, and a bar to show how much time has elapsed in the song.

For our next iteration we wanted to clarify how to select and begin a song, and provide affordances for the other features we wanted to include in the app.

The Next Iteration

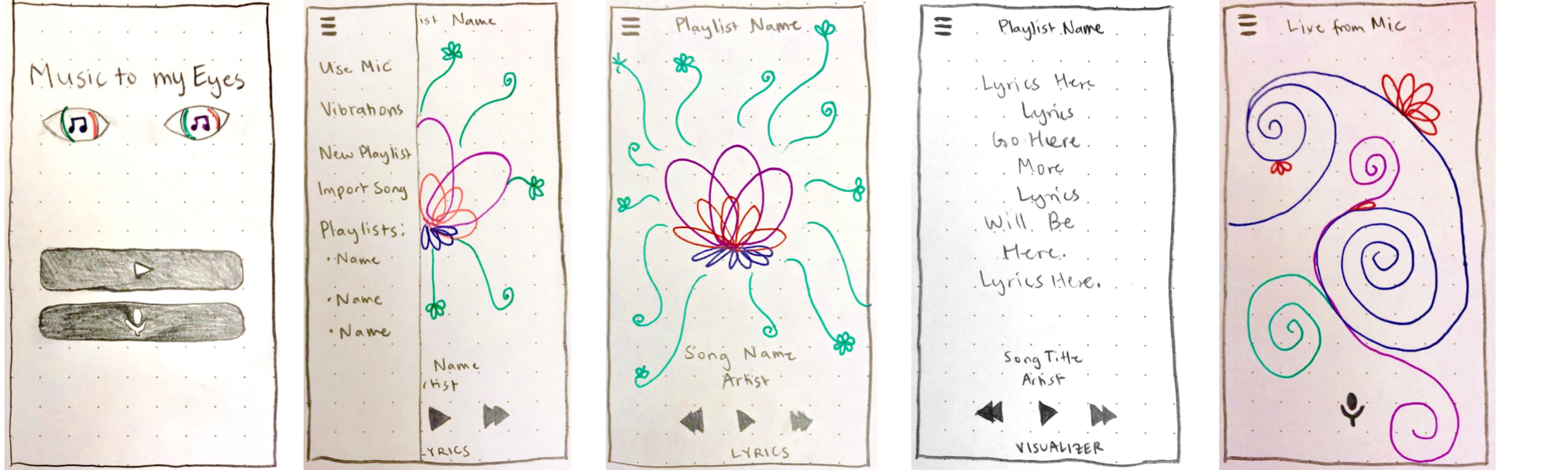

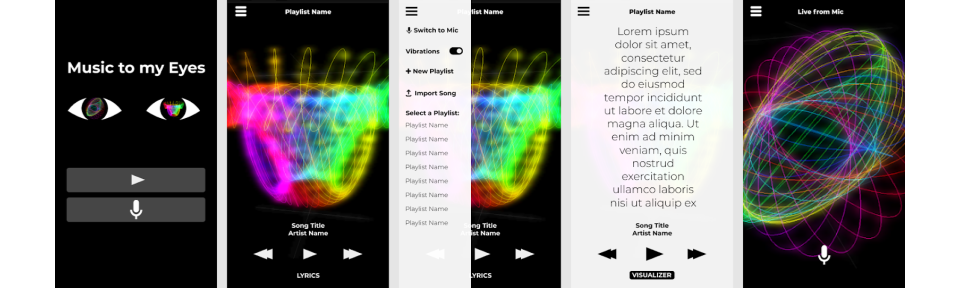

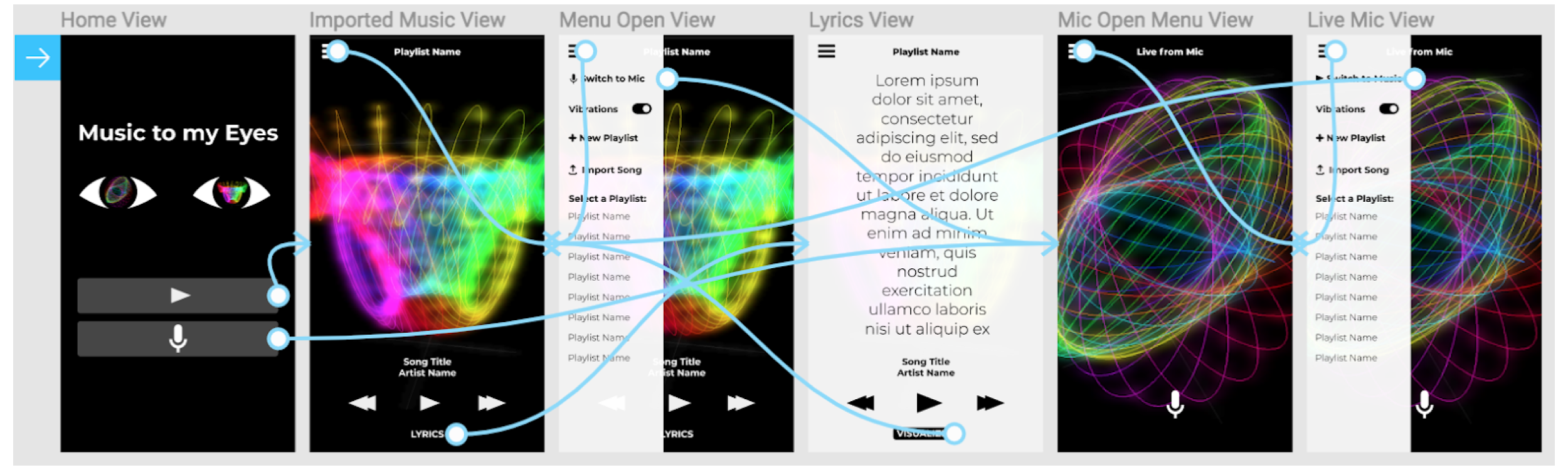

The next iteration shows the main screens of our application. Our Home View presents the options of either playing music or using the microphone to visualize live input. The Music View shows the music player, which contains visual patterns to represent the music. Next, the Menu Drawer shows how navigation between modes works. For example, from the Music View, you can go to the Microphone View, turn vibrations on or off, create new playlists, etc. Next, the Lyrics View shows the lyrics of the current song. Finally, the Microphone View shows live input from the user's environment using the mic.

Complete Functionality

Next, we refined the functionalities of each screen:

- Main Screen: Described above as the "Home View", this is the screen the user sees when they launch the app. From here you can choose to play songs or display sounds from the mic.

- Song Visualizer: Described above as the "Music View", this view displays the designs generated from the music. You can play/pause and skip to the next or previous song. You can also see the song title and artist, and click the lyrics button to view lyrics.

- Menu & Settings: Described above as the "Menu Drawer", this menu lets you switch to the mic input view, toggle vibrations, create a playlist, import songs, or select a playlist you have created.

- Lyrics View: This view shows the lyrics of a song along with the playlist name. You can still go back to the visualizer, play/pause, skip, and go to the previous song. You can also see the song title and artist.

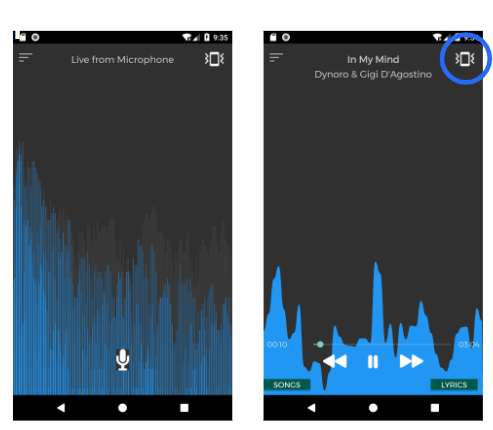

- Mic Visualizer: This is the live "Microphone View". It displays a different pattern than the song visualizer since it will be taking in the sounds caught by the microphone. You can click the microphone at the bottom of the screen to start/stop listening.

Mid-Fidelity Prototypes: Figma

Visual Design and Primary Tasks

We defined three primary tasks for our application:

- Song Visualizer: Captivating design based on the song currently playing. This is one of the main features of the app and will most likely be displayed most of the time.

- Lyrics View: Lyrics you can follow along with. You can also fade the Lyrics View so that the song visualizer is visible in the background. We thought this was an important view since the user may want to read the actual words of the song.

- Mic Visualizer: Inventive design of the sounds picked up by the microphone. This is another important view since the user could go to a concert and experience it through this view.

Interaction Design

Clicking the play or mic buttons will take the user to the Music or Live Mic Views, respectively. From either of these views they can use the hamburger menu icon in the top left to open a side-panel menu. From this menu they can toggle vibrations, switch to other views, or choose a playlist. From the Music View, they can also click “Lyrics” to open the Lyrics View, and “Visualizer” to switch back.

User Testing

Users

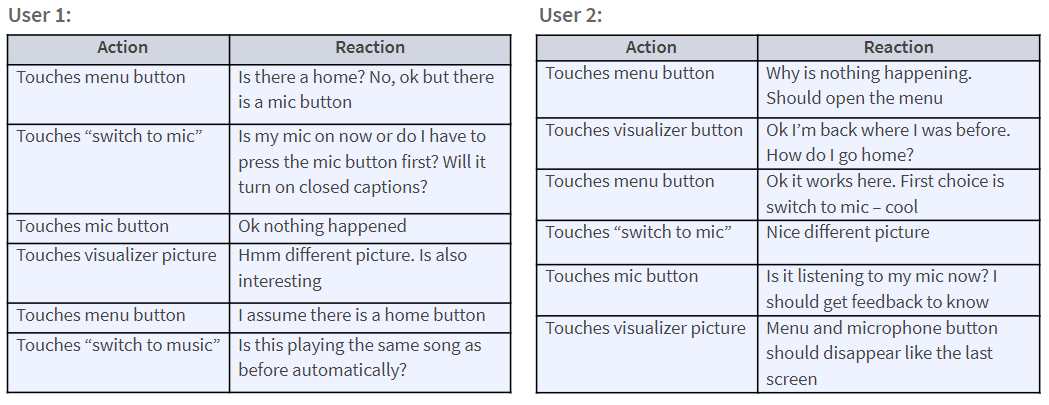

User feedback came from two users that we had previously interviewed.

- User 1: UC Berkeley student. 19 years old. Has minor hearing disabilities that were developed when they were very young.

- User 2: UC Berkeley student. 21 years old. Has had moderate hearing disabilities since birth.

Testing Environment And Procedure

The interviews were conducted at various locations on campus that provide privacy and minimal distractions.

We opened the Figma wireframe on our computer and asked the user to perform three different tasks while saying aloud any thoughts or feelings that came to mind.

We first told the user to play a song and interact with the visualizer. We then told the user to interact with the lyrics section. We finally told the user to switch to the microphone option and get the app to start listening.

We took notes on their actions/reactions on a blank piece of paper.

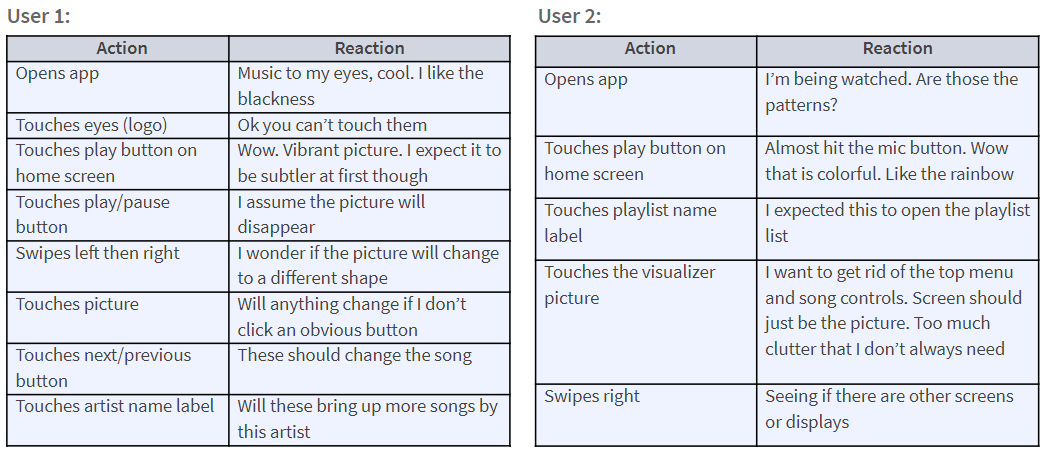

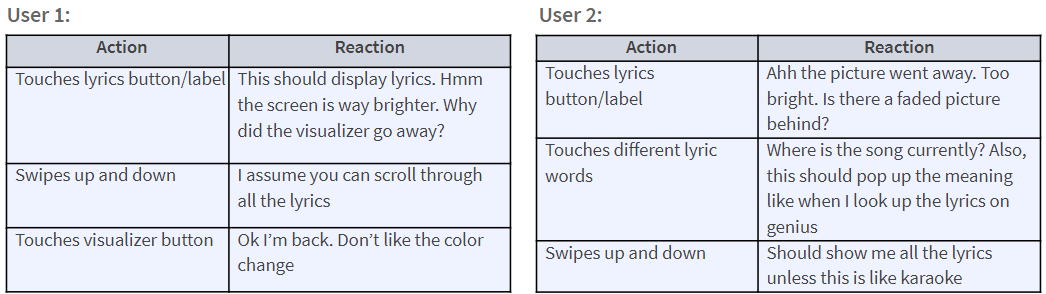

Critical Incidents

We noted some critical incidents while the users interacted with our application.

Song Visualizer

Lyrics View

Microphone Visualizer

Results

From our usability tests, we learned a few strengths and weaknesses of our application.

Strengths

- Good minimal design.

- Black color lets the vibrant colors of the visualizer stand out.

- Different visualizer displays.

- Easy to understand menu.

- No hidden controls - everything is intuitive.

- Easy to recognize which section you are currently in.

Weaknesses

- It is unclear whether some labels and images should, or even can, be clicked.

- Fat finger problem for some of the buttons.

- Lyrics section changes the feel of the app.

- Lyrics not displayed with the visualizer also in view.

- User feels trapped by not having a home button even if all functionality from the home button is readily available.

- In the Microphone section, it is unclear whether the microphone is currently on or off.

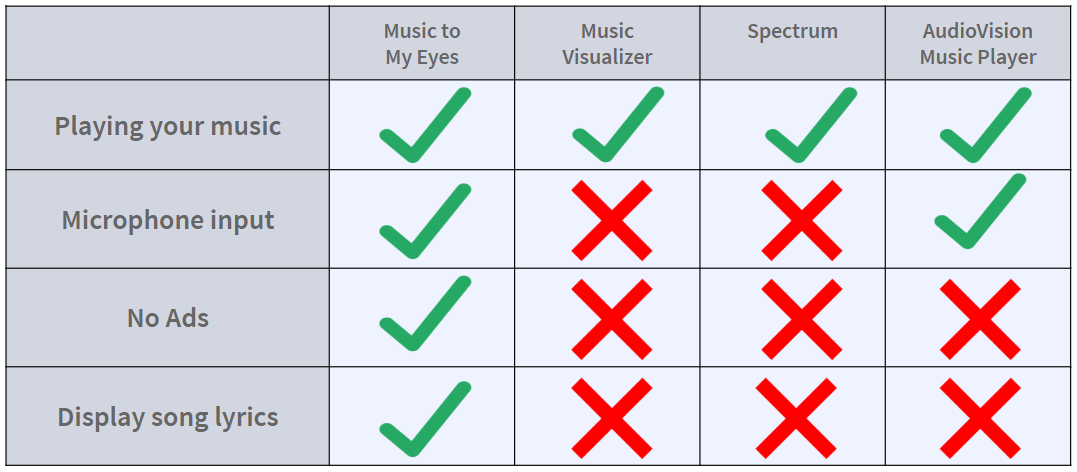

Competitive Analysis

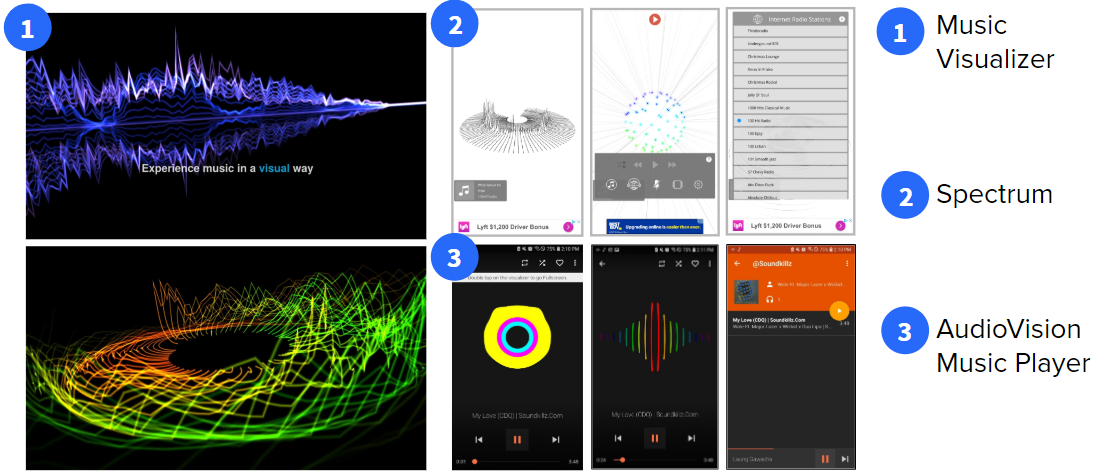

Music Visualizer

We did some research on this application. You can find it here.

Target User Group

The target user seems to be someone who just wants to see cool designs or make a music video in which the visuals are not necessarily fully correlated with the music. This differs from our users, since we want to make designs that represent the music and also use vibrations to depict certain aspects of the song.

Functionality

This app offers multiple visual layouts for displaying the music that is playing. Its functions also seem limited to a small set that the programmer has laid out. Ours will offer different visual layouts that are more representative of the songs, plus more customization, such as personal songs and microphone input. It will also be able to vibrate in certain ways for different parts of songs as another indication to the user of what is going on.

Usability

The app is hard to use since many of the settings and controls are hard to find or do not exist at all. It is also not easy to import your own songs, for example from the default music player. Our app is unique because it will allow the user to import their own songs or use the microphone to capture the sounds to visualize. It will also use the phone's vibration engine to give users haptic feedback about specific toggleable parts of a song.

Spectrum

We did some research on this application. You can find it here.

Target User Group

The target user group is not necessarily people with hearing disabilities; based on the comments section and app description, it seems that the user base is largely people who want to create and save visualizations of their favorite music to their phone. Some users were also musicians who wanted to import their own music to the app as an inexpensive way to make a music video to put on YouTube.

Functionality

The app offers about a dozen different visualization themes that users can choose from to visualize songs from their phone’s music library, select internet radio stations, or microphone input. Users can also choose between rainbow or black and white color schemes. There is no supplementary vibration, and on the iPhone it only allows you to select from the Apple Music app, not Spotify or other streaming apps. A few reviewers wished that the visualizer’s colors were improved, since they could only select “rainbow” or “black and white”.

Usability

The app is highly rated and users say it offers the best visualizations they’ve been able to find in an iOS app. When I downloaded the app to test it out, however, I found it difficult to navigate or use. Icons didn’t have labels or clear meanings, and to switch between visualizations the user has to swipe left to right or up and down, but there is no indication on the screen that this is something the user can do.

AudioVision Music Player

We did some research on this application. You can find it here.

Target User Group

This application offers a variety of themes, which shows that it is trying to provide users with ample visual cues. However, based on the comments section, most people who use the application use it for visuals accompanying their music. As a result, it seems as if this application is geared more towards individuals who listen to music functionally and simply want patterns accompanying their music rather than only using the application for the patterns.

Functionality

This application allows you to select music previously loaded from your device Music library and then displays several different patterns that you can choose from to match the music. You can swipe through these patterns to choose your favorite style. Some of these patterns are circles that shake or “resonate” with the beat. The songs are also displayed by album, which makes it difficult to find specific tracks.

Usability

This application is difficult to use because there is not a lot of information about how to interpret the patterns. Different patterns appear to differentiate between voice and beat, but there are no indicators to tell the user this; they must discover this feature on their own. It would be more convenient if the patterns had descriptions or were more consistent. This application would also be more convenient if it allowed users to select tracks instead of albums.

Feature Breakdown

The applications we found were not geared towards people with hearing disabilities. This was clear from their lack of lyrics, which are key in helping hard of hearing users feel like they are experiencing music similarly to their friends. They provide complex and often confusing visual patterns and interfaces with no instructions guiding users’ understanding. Additionally, each application violated usability heuristics that we intend to comply with – so that users can simply enjoy their music.

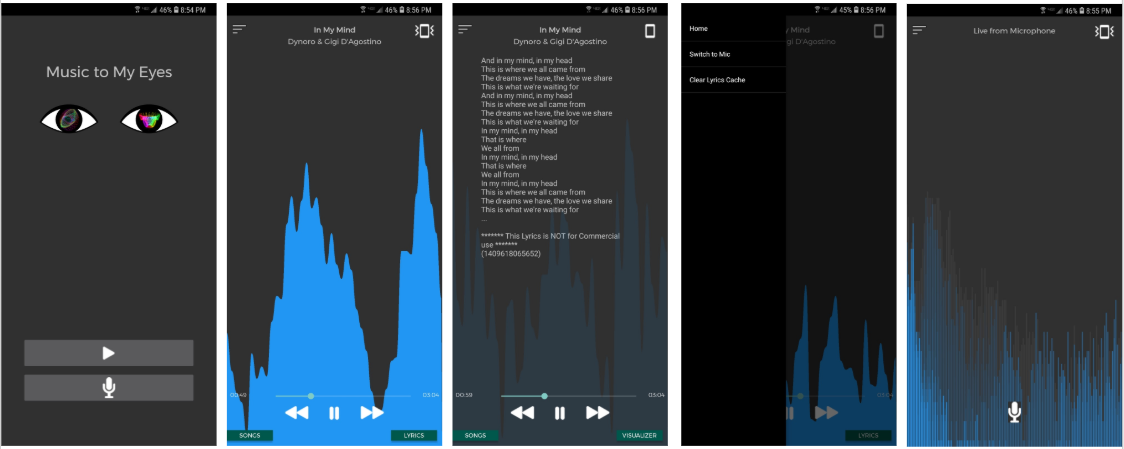

High Fidelity Prototypes: Android Studio

Visual Design

We implemented some overall design changes. We received feedback from our mid-fidelity designs that the background was too black and text too white, so in our high fidelity prototype we transitioned to a dark grey background and light grey text. This is easier on the eyes, and distracts less from the visualization itself. We also relocated the vibration toggle to the main screen, so clicking the icon at the top right now allows users to turn vibrations on or off.

We also implemented some changes to the individual screens:

- Song Visualizer: Song title and artist name have been relocated to the top of the screen. The slider bar has also been reintroduced. The ‘Songs’ button lets users select titles from their music library.

- Lyrics View: The visualization is now visible in the background of the lyrics, so the user can enjoy both features simultaneously.

- Mic Visualizer: The new Songs button pulls up a list of all the songs stored on the device, and displays the song title and artist. This adds clarity our previous design was lacking, to show how users can select the music they want to listen to.

Design Evolution

We made several changes in design to improve user experience:

- Dark background to enhance visualization.

- Songs button to access all tracks from device easily.

- Vibration mode icon in visualization screen for easy access/navigation between modes.

- Scrub feature to move through songs.

- Clear lyrics cache feature for optimization of space.

- Simpler visualization that is equally appropriate for all songs, to meet efficiency constraint (and deadline!).

Technical Challenges

We faced some technical challenges:

- Mic Visualizer: This was the most challenging part to figure out because the input from the mic arrives as FFT data, while the visualizer needs a waveform. I had to either translate the data into a format the visualizer accepts or build a new visualizer to handle the FFT data. I chose the latter because I wanted to keep the mic visualizer real-time. In the end, I had to do some complex-number (i) math to create points, which were then connected using the built-in bar graph.

- Vibrations: Vibrations were also difficult because I had to convert the raw data from the music player or mic into vibrations. I ended up having to use a newer minimum SDK than I wanted because of the vibrations. This was also hard because I could not test vibrations in the emulator, and most Android devices do not have a very good vibration engine. I had to determine how the vibrations should feel so that you could tell the difference between sounds. For the most part they feel similar, though there are minute details that let you distinguish between songs.
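To make the two challenges above more concrete, here is a minimal sketch in plain Java of the kind of math involved. It is not the project's actual code. It assumes the FFT arrives as a byte array with interleaved real and imaginary parts (the layout documented for Android's `Visualizer.getFft`), and that vibration strength is a value from 1 to 255 (the range Android's `VibrationEffect` accepts on API 26 and above). The class and method names (`AudioMapping`, `magnitudes`, `barHeights`, `vibrationAmplitude`) are hypothetical.

```java
import java.util.Arrays;

final class AudioMapping {
    // Largest magnitude a pair of signed bytes can produce (assumed scale).
    static final double MAX_MAG = Math.hypot(128, 128);

    /**
     * One magnitude per frequency bin. Assumed layout: fft[0] is the real
     * part of the DC bin, fft[1] the real part of the Nyquist bin (ignored
     * here), then fft[2k], fft[2k+1] are the real and imaginary parts of bin k.
     */
    static double[] magnitudes(byte[] fft) {
        double[] mags = new double[fft.length / 2];
        if (mags.length == 0) return mags;
        mags[0] = Math.abs(fft[0]);
        for (int k = 1; k < mags.length; k++) {
            mags[k] = Math.hypot(fft[2 * k], fft[2 * k + 1]); // |re + i*im|
        }
        return mags;
    }

    /** Averages neighboring bins into `bars` bar heights in 0..maxHeight. */
    static int[] barHeights(double[] mags, int bars, int maxHeight) {
        int[] heights = new int[bars];
        for (int b = 0; b < bars; b++) {
            int start = b * mags.length / bars;
            int end = Math.max(start + 1, (b + 1) * mags.length / bars);
            double sum = 0;
            int count = 0;
            for (int i = start; i < end && i < mags.length; i++) {
                sum += mags[i];
                count++;
            }
            double avg = count == 0 ? 0 : sum / count;
            heights[b] = (int) Math.round(Math.min(1.0, avg / MAX_MAG) * maxHeight);
        }
        return heights;
    }

    /** Maps the RMS magnitude of a frame to 0..255; 0 means "do not vibrate". */
    static int vibrationAmplitude(double[] mags) {
        if (mags.length == 0) return 0;
        double sumSq = 0;
        for (double m : mags) sumSq += m * m;
        double rms = Math.sqrt(sumSq / mags.length);
        return (int) Math.round(Math.min(1.0, rms / MAX_MAG) * 255);
    }

    public static void main(String[] args) {
        byte[] fft = {10, 0, 3, 4, 0, 0, 6, 8}; // made-up sample frame
        double[] mags = magnitudes(fft);
        System.out.println(Arrays.toString(mags));
        System.out.println(Arrays.toString(barHeights(mags, 2, 100)));
        System.out.println(vibrationAmplitude(mags));
    }
}
```

On a device, the bar heights would feed whatever view draws the bars, and a nonzero amplitude would be passed to the vibrator for a short pulse each frame. Using a single loudness value per frame is one plausible reason different songs end up feeling similar, as noted above.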

Technical Supplements

All of the APIs the project uses are declared in the Gradle files. The API key for the lyrics API is built into the code, so to use your own you need to get an API key from Musixmatch and paste it into the variable MusixMatch_KEY in the file located at com.cs160_fa18_30.musictomyeyes.api.ApiUtils. We currently have an API key set that can be used, but you may want to get your own. After that, the project can be built as normal through Android Studio.

When using the app, the user must grant permission for audio recording regardless of whether they are going to use the Recording Activity, because the visualizer requires this permission to work. The app also requires file access so that it can fetch the music on the device to play. Other than what is specified above, nothing extra is needed to get the app to work beyond the basic installation of the APK.